In January, I wrote about what I called the AI Productivity Paradox: the METR study showing experienced developers were 19% slower with AI, while believing they were 20% faster. At the time, I treated it as a cautionary signal, one data point in a broader discussion about measuring performance.

Two months later, the signal has become a pattern. Three independent sources, from three different angles, point to the same conclusion: AI is fracturing the talent pipeline that produces the people who build software.

Let me be clear about what I mean by “talent pipeline.” I’m not talking about recruiting funnels or employer branding. I mean the entire chain: how engineers learn, how we evaluate them, and how the gap between effective and ineffective AI users keeps growing. All three are breaking at the same time, and they reinforce each other.

First, the baseline: everyone’s using AI

Before I get into the fractures, the baseline. The Pragmatic Engineer’s 2026 survey of roughly 1,000 software engineers:

- 95% use AI tools weekly

- 75% use AI for more than half their work

- 55% use autonomous agents regularly (63.5% among staff+ engineers)

- Claude Code went from zero to the #1 AI coding tool in eight months, overtaking Copilot and Cursor

This isn’t early adoption anymore. This is the water we swim in. The fractures I’m about to describe aren’t fringe concerns; they affect the basic mechanics of how our industry develops engineers.

Fracture 1: the skill tax

In February, Anthropic published an RCT (randomized controlled trial, rare in software engineering research) with 52 professional developers learning Python Trio, a concurrency framework none of them knew. I haven’t seen enough people talking about this one.

Half the group got a GPT-4o coding assistant. Half got documentation only.

The results:

| Finding | Data |

|---|---|

| Skill gap | AI users scored 17% lower on conceptual understanding (Cohen’s d=0.738, p=0.01) |

| No speed gain | No significant difference in task completion time (p=0.391) |

| Debugging gap | Docs-only group hit 3 median errors vs 1 for the AI group |

Read that last row again. The group without AI hit more errors, and that’s the point. They struggled, they debugged, and they came out the other side understanding the framework better. The AI group got working code faster, but when tested on whether they actually understood what they’d built, they scored significantly worse.

The study calls this the “skill tax”: a 17% learning penalty that applies regardless of experience level. AI doesn’t make you dumb. It removes the productive friction: the debugging, the wrong turns, the “why doesn’t this work?” moments that turn exposure into understanding.

The pattern analysis tells you where the split happens. Developers who scored well (65-86%) even with AI used it for explanations alongside code: “explain this concurrency model,” “why does Trio handle cancellation this way?” Developers who scored poorly (24-39%) delegated heavily, pasting outputs without engaging with the reasoning. Some spent 11 minutes out of a 30-minute task window just interacting with the assistant.

These aren’t abstract statistics. I recognized myself in those patterns. When I’m working in Go, a language I know deeply, I use Claude Code as a power tool. I challenge its suggestions, I know when it’s wrong, I can evaluate trade-offs. But when I dabbled in a new framework recently, I caught myself doing exactly what the low-scoring group did: accepting outputs, moving fast, feeling productive. The AI Perception Gap I wrote about in January goes deeper than speed. It’s about mistaking output for learning.

Now think about your junior developers. If they’re using AI for 75% of their work (which, per the Pragmatic Engineer survey, many are), and if the Anthropic study’s findings generalize, they may be producing code at an acceptable rate while building conceptual understanding 17% slower than they would otherwise. That compounds. Over months and years, it becomes a structural deficit in your team’s capability.

Fracture 2: hiring is broken

If Fracture 1 is about how engineers learn, Fracture 2 is about how we evaluate them. The same AI that weakens learning is also destroying the tools we use to assess it, and the timing couldn’t be worse.

A debate in the CTO Craft community (Andy Skipper’s newsletter, TMW #471) put words to what I’d been noticing. The hiring funnel is compromised at both ends.

On the input side: CVs are AI-optimized, cover letters AI-written. The signal-to-noise ratio on paper credentials has collapsed.

On the assessment side: candidates sail through take-home coding challenges (AI wrote the code) and then crumble when asked to defend their solutions. The take-home test, which I always considered a fairer alternative to whiteboard hazing, is now essentially a test of “can you prompt an AI and clean up the output?”

The community converged on a few conclusions that, frankly, I wish I’d reached sooner in my own career.

The discussion IS the interview. Let candidates use AI on the take-home (they will anyway). Then make the interview about explaining, defending, and modifying the solution under pressure. Can they change the error handling approach? Can they explain why they chose this concurrency pattern? If they can’t, the code isn’t theirs, regardless of who (or what) wrote it.

Pair programming beats everything else, specifically because AI can’t help you in real time the way it can on a take-home. It surfaces thinking process as it happens, and that’s almost impossible to fake. Yes, it’s expensive. Yes, it doesn’t scale. That’s exactly why it works.

And warm referrals have become the highest-signal filter, because they’re the one input AI hasn’t learned to game yet. Trusted networks provide more reliable signal than any automated screening. This one stings a little, because I spent years building structured hiring processes that were supposed to remove bias. Now the most reliable signal comes from knowing someone who knows someone.

This connects directly back to Fracture 1. If AI makes engineers learn slower (the skill tax), and AI also makes it harder to detect that slower learning (compromised assessments), you have a feedback loop. You hire people who look competent on paper, who produce acceptable code, but who lack the deep understanding that surfaces when things go wrong. And in software, things always go wrong.

I’ll be honest: at HikmaAI, we’re a small team, and our hiring process is informal enough that this hasn’t bitten us yet. But I’ve been on the other side of this equation many times in my career as CTO and VP Engineering, hiring hundreds of engineers. Looking back, about half of the evaluation methods I relied on would be useless today. That’s a sobering thought.

Fracture 3: the 99% problem

The third fracture is the hardest to see because it’s invisible from the inside.

Alberto Romero, writing in The Algorithmic Bridge, analyzed OpenAI’s usage data and found something striking: power users (the top 5% of paid users) use “thinking capabilities” 7x more than the median paid user. With roughly a billion ChatGPT users total, that 5% of 50 million paid accounts translates to about 2.5 million people, roughly 0.25% of the entire user base, getting the kind of performance that makes headlines.

For the other 99%+, AI feels… fine. Useful, maybe. A bit faster at some things. But nothing like the transformation the hype cycle promises. And the gap isn’t about access; everyone has the same tools. It’s about usage patterns.

For engineering teams, this means identical tool access produces completely different outcomes. Two developers on your team, same IDE, same AI subscription, same codebase. One uses extended thinking, structures prompts carefully, provides context, iterates. The other types “write a function that does X” and accepts the first output. The gap between them isn’t 10% or 20%. It’s an order of magnitude.

And it compounds. The developer who uses AI well builds better mental models, learns which problems AI handles well and which it doesn’t, develops taste for AI-generated code. The one who uses it poorly never develops that taste because they never see the gap between what they got and what was possible.

“AGI is already here,” Romero writes, “it’s just not evenly distributed.” The difference isn’t whether you use AI. It’s how.

I see this at HikmaAI and Wishew too, though on a smaller scale. I’ve spent months building modular skills, quality gates, and review pipelines for how I work with Claude Code. When I watch someone else use the same tool without that scaffolding, it’s like watching someone use Excel for its calculator function and never discovering pivot tables. Same software, completely different results.

The strategic frame: where this all fits

Luca Rossi (Refactoring newsletter) recently proposed what he calls the New Pyramid of Software Engineering, based on 350+ team surveys and 100+ CTO conversations. It’s a three-layer model where each layer only works if the one beneath it is solid:

| Layer | What | Prerequisite |

|---|---|---|

| 1. Developer Experience (base) | CI/CD, tooling, environments, onboarding | None, always invest |

| 2. AI (middle) | AI tools, workflows, spec-driven development | Good DX foundation |

| 3. Product Engineering (top) | Shipping value, product-minded engineering | DX + AI working |

This pyramid explains why the three fractures matter strategically, not just tactically. Most AI advice is tactical: “use this prompt,” “try this tool,” “configure agents this way.” CTOs correctly object that tactical advice has two problems: volatility (it’s obsolete in weeks) and applicability (it doesn’t fit my team). The pyramid gives you a frame to evaluate any tactical AI decision.

The fractures? They’re all happening at Layer 2, but they’re caused by gaps in Layer 1. If your DX foundation doesn’t include deliberate learning practices, the skill tax accumulates unchecked. If your hiring process hasn’t adapted, you’re building teams on a broken signal. If AI fluency is left to individuals rather than treated as a team capability, the 99% Problem is your default state.

Don’t skip layers.

What I’m doing about it

I don’t have this figured out. But after 25 years building engineering teams and now being back in the trenches writing Go at HikmaAI and Wishew, here’s where I’ve landed.

On the skill tax: I’ve started distinguishing between what Anthropic calls “performance tasks” and “learning tasks.” When I’m working in Go, AWS, CI/CD (domains I know well), I let Claude Code run freely. But when I’m learning something new, I force myself to slow down. I ask for explanations, not implementations. I deliberately debug before asking AI for help. It feels slower because it is slower. That’s the point.

On hiring: I don’t have a complete answer, but I know the direction. Pair programming sessions where the candidate can (and should) use AI, followed by modification challenges: “now change the error handling approach.” The question isn’t “can you code?” It’s “do you understand what you’ve built?”

On the 99% Problem: at Wishew, I’ve codified my AI workflow into Skills, review gates, and quality checks that anyone on the team can adopt. The scaffolding matters more than the talent of the individual user. Make the right way the easy way, and the gap shrinks.

And underneath all of this sits the two-layer system I described in January: Claude generates, Gemini validates. It remains my strongest defense against the Perception Gap. If I feel productive but the reviewer catches issues, the data wins over the feeling.

What engineering leaders should be asking

If you’re leading an engineering team in 2026, four questions.

Are your juniors learning or just producing? Track time-to-independence on new domains, not code output. If engineers can’t debug without AI after six months, the skill tax is real in your org.

Does your hiring process test understanding or output? If a candidate can’t modify their own solution under pressure, their take-home is meaningless. Budget the time for pair programming. Right now it’s the only reliable signal left.

How wide is your AI fluency gap? Survey how your team actually uses AI (not whether they use it, but how). If you find a 7x thinking-time gap between your best and worst AI users, that’s a training problem, not a tools problem.

Are you building on all three layers? Don’t invest in AI tools (Layer 2) if your DX foundation (Layer 1) is broken. Fix CI, fix environments, fix onboarding first. AI multiplies whatever’s already there, including the chaos.

The uncomfortable part

AI now makes it easier to produce code and harder to develop the judgment needed to produce good code. Same tools, same access, completely unequal outcomes. The feedback loops that used to build expertise are eroding, and most of us haven’t noticed yet because the output looks fine.

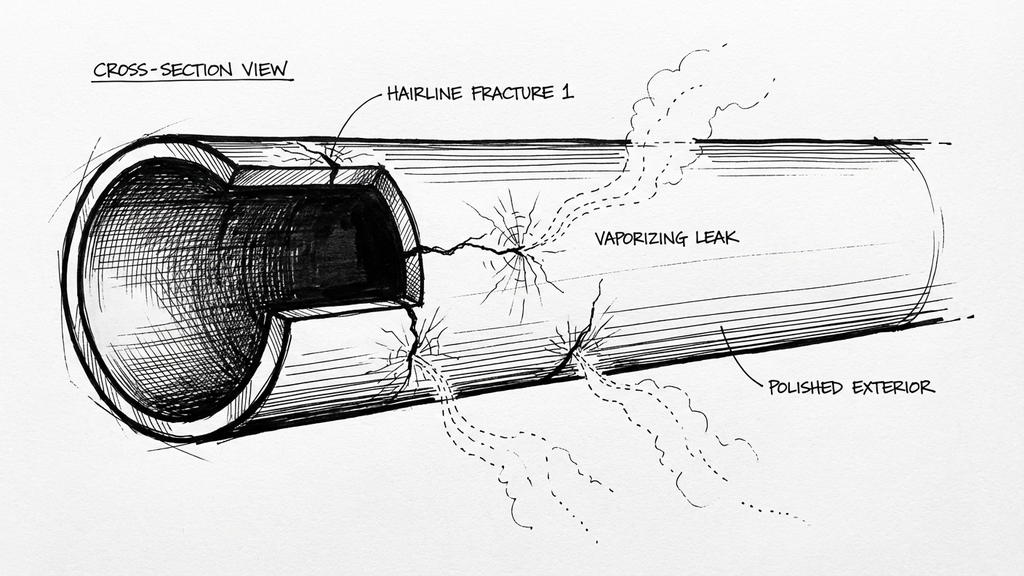

The talent pipeline isn’t breaking in one dramatic failure. It’s developing hairline fractures, in learning and in evaluation, that are easy to miss individually. Together, they’re reshaping what “being good at software” even means.

The teams that come out of this well won’t be the ones that adopted AI fastest. They’ll be the ones that protected the friction required for actual learning, even when everyone around them was celebrating how much faster things felt.

In my previous article, I wrote: “In the AI era, productivity has shifted from writing speed to validation speed.” I’d update that now: In the AI era, the most valuable engineering skill isn’t using AI. It’s knowing when not to.

Sources and further reading

- Anthropic RCT on AI-assisted learning - RDEL Newsletter #133, 2026

- CTO Craft TMW #471, Andy Skipper - AI and hiring, 2026

- Alberto Romero, “The 99% Problem” - The Algorithmic Bridge, 2026

- Pragmatic Engineer AI Tooling Survey - ~1,000 respondents, 2026

- Luca Rossi, “The New Pyramid” - Refactoring Newsletter, 2026

- METR Study, July 2025 - AI and developer productivity

- My previous articles: AI Productivity Paradox, Modular Skills

Methodology note: This article was written with AI assistance (Claude Code). The sources were collected in my personal knowledge base over two weeks of newsletter processing. The synthesis, opinions, and editorial choices are mine. Claude helped with structuring and drafting, and I used my own humanizer pipeline to remove AI-isms from the prose. If you spot one I missed, I owe you a coffee.