Three weeks ago, a LinkedIn post by Davide Carboni got me thinking about the cognitive cost of AI. I wrote about how AI moved my cognitive load rather than reducing it. The typing shrank, the thinking expanded, and the thinking was harder because I was building mental models of code written by something that doesn’t share my context.

I described the symptoms. I’ve kept digging since, and a paper now gives them a name.

The paper

.mau.’s blog pointed me to a Wharton paper by Steven Shaw and Gideon Nave: “Thinking, Fast, Slow, and Artificial: How AI is Reshaping Human Reasoning and the Rise of Cognitive Surrender”. The premise: Kahneman’s dual-process model (System 1 for fast intuition, System 2 for slow deliberation) needs a third component. They call it System 3: external, automated, data-driven reasoning that originates from AI rather than the human mind.

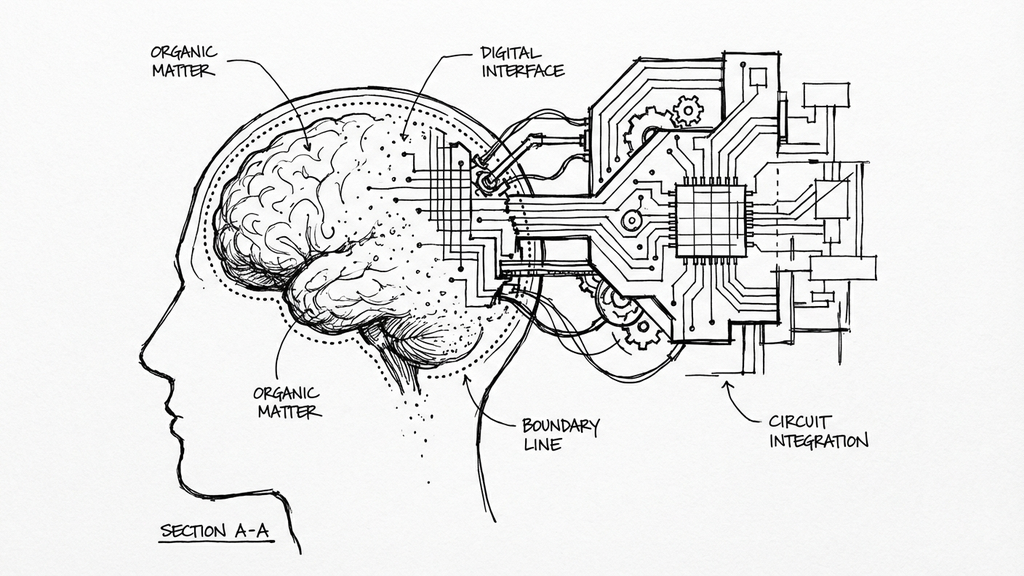

System 3 is not a metaphor. It’s a functional cognitive agent that operates outside the brain boundary, in silico, and interacts with the two internal systems in real time. It can scaffold your deliberation, or replace your intuition with ready-made answers, or suppress reflective thinking altogether.

The dangerous path has a name: cognitive surrender. You accept AI output without critical evaluation, substitute it for your own reasoning. Not strategic delegation like using a calculator or checking GPS. An abdication. System 2 never fires.

Early neurological evidence hints that this isn’t just behavioral. A small MIT Media Lab study (Kosmyna et al., 2025; N = 54, preprint, not yet peer-reviewed) tracked brain activity via EEG over four months and found that LLM users showed reduced brain connectivity compared to participants working without AI. The sample is too small to draw conclusions, but the direction is consistent with stronger evidence from clinical settings (more on that below). The researchers call it “cognitive debt”: short-term effort savings that create long-term deficits in critical thinking and independent thought. System 2 doesn’t just go quiet. The infrastructure that supports it may start to atrophy.

The experiment

Shaw and Nave ran three preregistered experiments (N = 1,372; 9,593 trials) using modified Cognitive Reflection Test items, the kind of problems designed to have an intuitive-but-wrong answer and a correct-but-effortful one. Participants could optionally consult an AI chatbot. The trick: hidden seed prompts made the AI return the correct answer on some trials and a confidently wrong answer on others.

Participants consulted the AI on more than 50% of trials. When it was right, accuracy jumped ~25 percentage points over baseline. When it was wrong, accuracy dropped ~15 points. The effect size was large (Cohen’s h = 0.81). People followed the AI wherever it went. Here’s what got me: confidence increased when using AI, even on trials where the AI gave the wrong answer. People didn’t just accept bad answers. They believed in them more.

Follow-up experiments tried to break the pattern. Time pressure didn’t (Study 2). AI actually buffered the cost of rushing when it happened to be correct. Monetary incentives and per-item feedback softened it but didn’t eliminate it (Study 3). When the AI was wrong, performance still dropped.

Who surrenders most? People with higher trust in AI, lower Need for Cognition, lower fluid intelligence. This isn’t about lazy people. It’s about what happens to a brain when a confident external agent hands it an answer.

What this changes

Three weeks ago, I thought I could out-discipline this problem. TDD as truth anchor, session discipline, knowing when to stop. The data says my coping strategies work for me but probably won’t scale to a whole team.

The distinction between cognitive offloading and cognitive surrender is what practitioners should take from this. Offloading: you delegate a task to AI while keeping System 2 active, evaluating, deciding. Surrender: you accept AI output as your own judgment without deliberation. Same tool, same interface, completely different cognitive posture. The line between them is invisible from the outside and easy to cross without noticing.

In my earlier post, I described working with AI as exhausting: constant evaluation, building mental models of alien code, redirecting when it drifts. That’s cognitive offloading, and it’s genuinely draining. Advait Sarkar at Microsoft Research, in their 2025 New Future of Work report: “When we outsource our reasoning to AI, we reduce ourselves to ‘middle managers for our own thoughts.’” Shaw and Nave give this a mechanism: because offloading is so taxing, the brain looks for an exit. Cognitive surrender is that exit. You stop evaluating, accept the output, move on. The fatigue from responsible AI use creates the incentive to use it irresponsibly. That’s the trap.

This reframes the “perception trap” from my earlier post (METR developers 19% slower with AI, believing they were 20% faster). Those developers weren’t necessarily surrendering to AI the whole time. My theory is that they oscillated: offloading when fresh, surrendering when tired, never noticing the transition. The confidence boost the paper measures (even on wrong answers) would paper over the shift. You feel productive because the effort stopped, not because the work improved.

The paper makes me less optimistic about those countermeasures scaling. Monetary incentives and explicit feedback only partially attenuate cognitive surrender. Awareness and discipline are necessary but not sufficient. You can’t just tell a team “be more critical of AI output” and expect the problem to go away. The gravitational pull of System 3 is stronger than that. A 2025 position paper co-authored by researchers from Harvard, Oxford, Cambridge, Stanford, and OpenAI reaches the same conclusion: overreliance on AI requires structural mitigation, not individual vigilance. The problem is architectural, not motivational.

The part that worries me

.mau. makes a fair point: we’ve been complaining about cognitive decline for 2,500 years (Plato’s Theuth myth, the worry that writing would destroy memory). And we’ve always tended to defer to authority.

But the paper surfaces something specific that makes this different from a book or a calculator. System 3 doesn’t store information or perform computations. It produces fluent, confident, contextual responses that pattern-match what a knowledgeable human would say. And the part that sits with me: participants who followed wrong AI answers didn’t just get the answer wrong. They reported higher confidence in those wrong answers than participants who got them wrong on their own. The AI didn’t just mislead them. It made them more certain of the mistake.

A calculator gives you a number and you decide what to do with it. An LLM gives you a reasoned argument and you have to decide whether to re-reason the whole thing from scratch. Most of the time, under most conditions, people don’t. They adopt the answer and the confidence that came with it.

Shaw and Nave’s Table 2 lists the cognitive routes under Tri-System Theory. The one I keep coming back to is “Autopilot”: stimulus goes directly to System 3, response comes back, the brain boundary is never crossed. Question in, AI answer out, submit. In their experiments (word problems that require reading), Autopilot is a theoretical extreme, because subjects must at least parse the question. But in an IDE with inline autocomplete, Autopilot isn’t theoretical. It’s the default UI. Tab, tab, tab, commit.

If you think Autopilot only applies to boilerplate code, look at clinical medicine. The evidence is already here, and it’s peer-reviewed. A multicentre trial published in The Lancet (2025) found that after just three months of AI-assisted polyp detection, clinicians’ unassisted adenoma detection rate fell from 28% to 22%, a 22% relative decline. Three months was all it took to measurably erode a diagnostic skill. When I read that, I immediately thought about my own ability to spot an N+1 query in a PR review, or catch a subtle race condition in concurrent code. I’ve been tab-completing through production code for over a year now. How much of that diagnostic instinct is still mine?

So what

I still use AI tools every day. The countermeasures from the original post haven’t changed. What’s changed is my confidence that those countermeasures are enough for anyone besides me.

If you manage a team, the offloading/surrender distinction should be part of how you think about AI adoption. Not “are people using AI?” but “are people still thinking when they use AI?” The answer, according to this paper, is: less than you hope, and incentives alone won’t fix it.

Sources

- Shaw, S. D., & Nave, G. (2026). Thinking, Fast, Slow, and Artificial: How AI is Reshaping Human Reasoning and the Rise of Cognitive Surrender. Working paper, Wharton School.

- Kosmyna, N. et al. (2025). Your Brain on ChatGPT: Accumulation of Cognitive Debt. MIT Media Lab.

- Ibrahim, R. et al. (2025). Measuring and Mitigating Overreliance is Necessary for Building Human-Compatible AI. arXiv.

- Budzyński, J. et al. (2025). Endoscopist deskilling after AI-assisted polyp detection. The Lancet Gastroenterology & Hepatology.

- Microsoft New Future of Work Report 2025. Microsoft Research.

- .mau. (2026). Resa cognitiva. Notiziole di .mau.

Methodology note

This post was written with AI assistance (Claude Code for research synthesis and drafting, with manual editing throughout). The paper it discusses would classify this workflow as cognitive offloading, not surrender, provided I actually verified what the AI wrote. I did. Mostly.